While modern technology, particularly artificial intelligence (AI), has made life easier for humanity, we can no longer ignore its toll on the environment. Indeed, a 2019 study estimated that training a single large AI model can emit more than 625,000 lbs of carbon dioxide, and the power consumption of newer iterations of the technology is growing rapidly.

But a recent article published in the research journal Nature Communications offers a more sustainable solution to this developmental challenge: quantum tunneling.

Building smarter, cleaner AIs

According to Shantanu Chakrabartty, Clifford W. Murphy Professor at the McKelvey School of Engineering at Washington University in St. Louis, MO, and primary author of the study, training an AI uses up too much energy. The process generates a great deal of heat, and large volumes of water are needed to keep the hardware at a functional temperature.

As Chakrabartty puts it, it is akin to boiling a lake just to set up a small neural network.

Quantum tunneling enables developers to use electrons to conserve energy in the process of AI training.

In the process formulated by Chakrabartty and his team, developers use the natural action of electrons to train artificial intelligence. When solutions to problems are set in a stable state, electrons gravitate toward the correct solution with minimal assistance from any algorithms coded into the training program. Furthermore, electrons will naturally follow the fastest and most energy-efficient route toward the correct answer when this happens.

This is a stark contrast to, and a more refined approach than, the brute-force methods employed by most developers. In those methods, an AI’s route is recorded step by step, and considerable energy is used whenever switches are turned on or off. The quantum tunneling approach is thus more energy-efficient than “force-feeding” a regulated amount of power into an AI’s memory array.

A viable solution

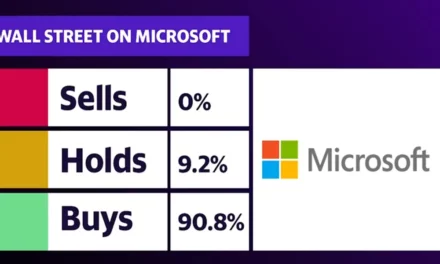

Because conventional AI training and development require so much power, many companies are hard-pressed to train new AIs from the ground up.

As a result, they train a model just enough to be functional and then tweak it for various applications. Larger companies, on the other hand, simply move their data centers closer to a water source big enough for cooling.

Quantum tunneling may soon make both approaches obsolete, as its “learning in memory” approach uses far less energy and fixes vital concepts into an AI’s memory more efficiently.